In this post, we share one of our favorite “pet projects”.

We first heard about the “Parrots of Telegraph Hill” while looking for things to see in the city. But even after a couple of years, we had never managed to run into one of these accidentally re-wilded parrots.

Eventually, we moved to a new apartment where we could hear their distant squawks and occasionally see a small flock of the cherry-headed conures. Then we began to notice a shadow passing the window and figured out that a pair of parrots was actually roosting next door!

Despite living so close, it was difficult to get a good view. Every time we approached the window, they would take off, so we set up cameras to watch them without disturbing them.

In time, we earned their trust by putting out bird seed, and we were even able to hand-feed them apples.

But these little parrots have good reason to be on guard as raptors are always on patrol.

Besides the safety in numbers, these birds are pretty good at disguise!

After much observation, we learned to recognize each bird by its markings and behavior.

Finally, as the chirps emanating from the nest grew louder, we even had the chance to spot a chick!

At the same time, we were inspired by the perspectives of modern animal documentaries, which have been greatly enhanced by embedded cameras. We dreamed of the views these animals must enjoy of our city!

Fascinated by these critters, we tried getting closer with embedded cameras like the Arduino Portenta.

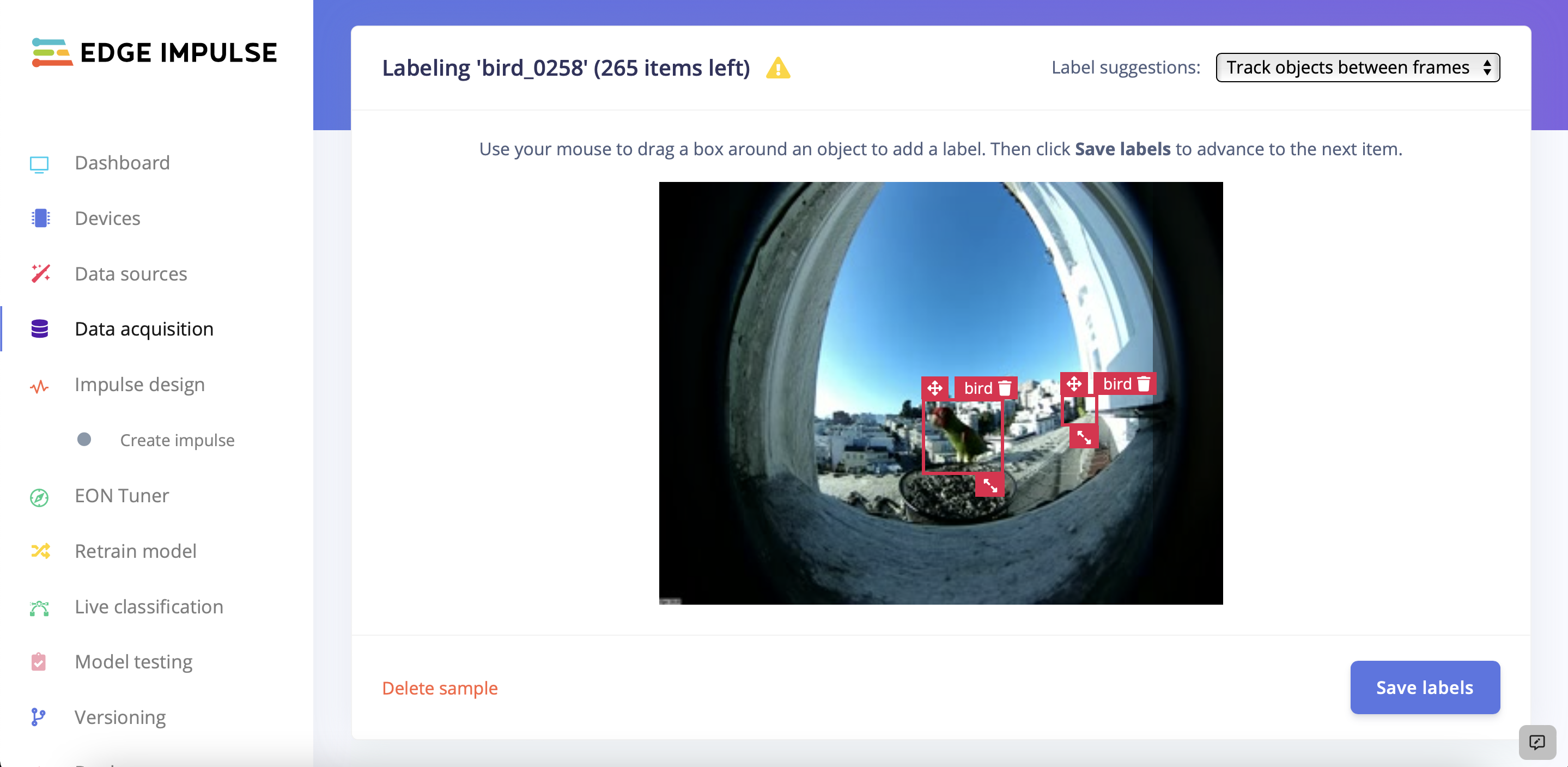

We also set up DepthAI cameras with wide-angle lenses to detect and track our parrots.

With our feathery muses, we explore fast detection on the Arduino.

Using the DepthAI camera, we can measure distance by calculating depth from stereo disparity.

But disparity shrinks in inverse proportion to distance, so with a 7.5 cm baseline, we needed to try something else if we wanted to estimate distances greater than a few meters.
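To make the baseline limit concrete, here is a minimal sketch assuming an ideal, rectified pinhole stereo pair. The 800 px focal length and the 1.5 m wide baseline are illustrative assumptions, not measurements from our cameras.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo relation Z = f * B / d for a rectified pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m, focal_px, baseline_m, disparity_error_px=1.0):
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


FOCAL_PX = 800.0  # assumed focal length in pixels (hypothetical)

# 0.075 m is the baseline from the post; 1.5 m is an assumed wide baseline.
for baseline_m in (0.075, 1.5):
    for depth_m in (2.0, 10.0, 30.0):
        disparity = FOCAL_PX * baseline_m / depth_m
        err = depth_error(depth_m, FOCAL_PX, baseline_m)
        print(f"B={baseline_m:5.3f} m  Z={depth_m:5.1f} m  "
              f"d={disparity:7.2f} px  error={err:6.2f} m per px")
```

Under these assumed numbers, a one-pixel disparity error at 10 m corresponds to roughly 1.7 m of depth error with the 7.5 cm baseline. The error grows with the square of the distance and shrinks in proportion to the baseline.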

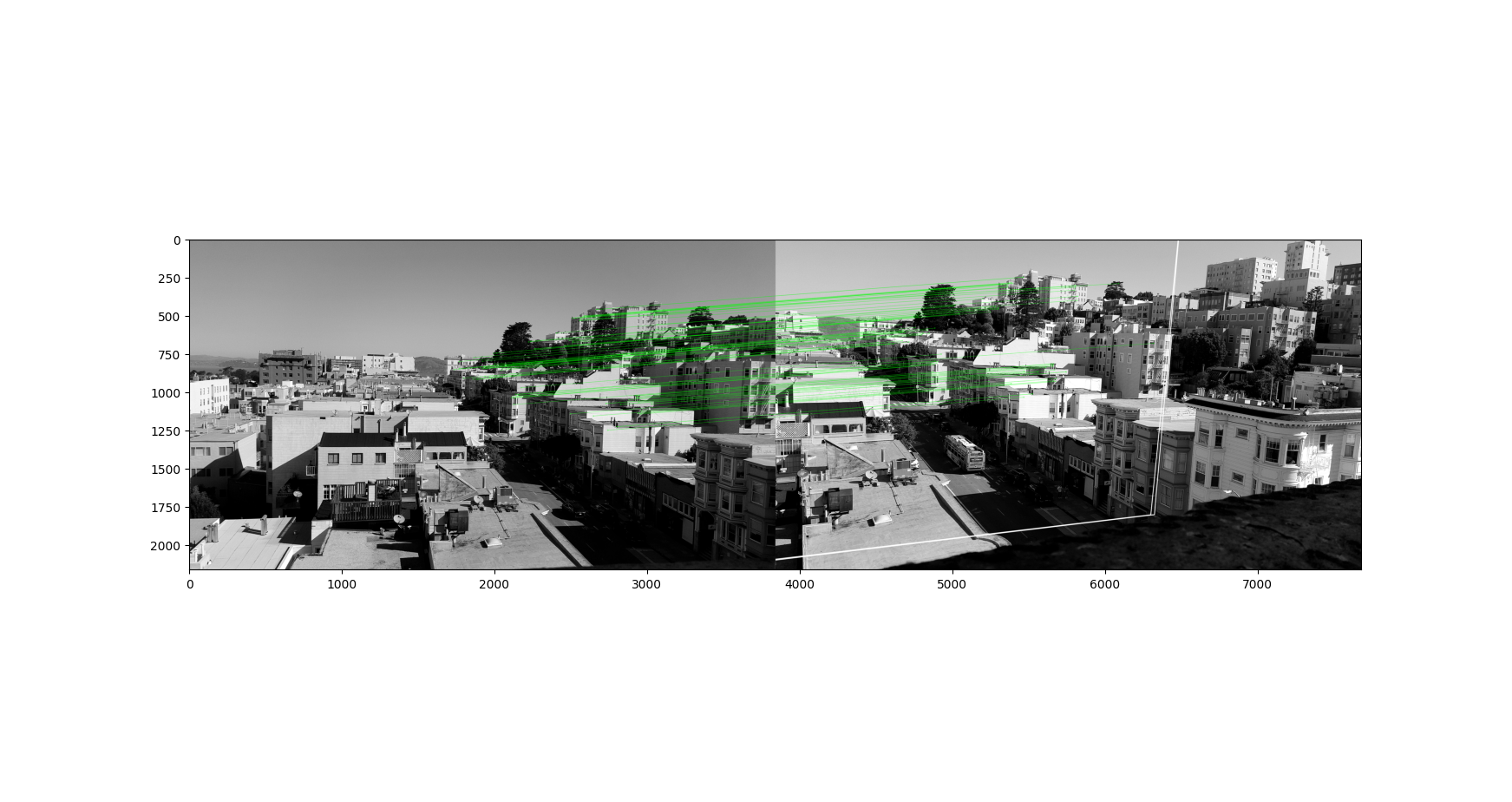

Recently, we’ve been experimenting with multi-view stereo, especially with wide baselines. After searching around, we devised a plan to add long-range stereo vision for early bird detection.

Refer to Learning OpenCV for more details about calibrating stereo cameras. Generally, one performs calibration with a checkerboard, but we instead use Hartley’s method for uncalibrated rectification and find keypoints with SIFT.

At a high level, by matching points between the two views, we can estimate the difference in camera pose. From this information, we can find the homography that warps one view to align with the second. This simplifies the search for matching pixels, from which we calculate the disparity used in reconstruction.

By adding a second 4K camera, we can extend the depth range by tens of meters with a baseline as wide as a bay window!

Even though we can estimate distance at longer ranges, detecting our stereo parrots is still quite difficult! But researchers are applying similar techniques to protect migrating birds from wind farm turbines.

Up close, they are recognizable by their distinctive red-green pattern, but at a distance, color is less discernible.

Instead, we humans recognize the parrots by their distinctive call as well as their shape and motion as they fly. We can try temporal stacking of three successive grayscale frames, as has been done to detect birds in flight.
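One way to picture temporal stacking is to place three successive grayscale frames into the three channels of a single image, so that a detector built for RGB input also sees short-term motion. The sketch below assumes RGB input frames and standard luma weights. The helper names are hypothetical, and this is not necessarily how the bird-in-flight detectors do it.

```python
import numpy as np

LUMA_WEIGHTS = np.array([0.299, 0.587, 0.114])  # common RGB-to-gray weights


def to_gray(frame_rgb):
    """Convert an (H, W, 3) RGB frame to an (H, W) float grayscale image."""
    return frame_rgb[..., :3].astype(np.float64) @ LUMA_WEIGHTS


def stack_three_frames(frames_rgb):
    """Stack three successive frames as channels of one (H, W, 3) image:
    channel 0 = oldest frame, channel 2 = newest frame."""
    if len(frames_rgb) != 3:
        raise ValueError("expected exactly three frames")
    grays = [to_gray(f) for f in frames_rgb]
    stacked = np.stack(grays, axis=-1)
    return np.clip(np.round(stacked), 0, 255).astype(np.uint8)
```

In the stacked image, static background pixels have equal values in all three channels and look gray, while anything that moved between frames shows up as a colored fringe.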

In Chapter 12 of Learning FPGAs, the author guides us in building a sound direction detector with an array of microphones! We can add this component to focus our multi-camera system.
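For intuition only, here is a generic time-difference-of-arrival sketch for two microphones. It is not the FPGA design from the book. The microphone spacing, sample rate, and function names are assumptions for illustration, and it presumes a far-field source and a fixed speed of sound.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature


def estimate_delay(sig_a, sig_b, sample_rate):
    """Delay in seconds of sig_b relative to sig_a, taken from the peak
    of their cross-correlation (positive when sig_b lags sig_a)."""
    corr = np.correlate(sig_b, sig_a, mode="full")
    lag = int(np.argmax(corr)) - (len(sig_a) - 1)
    return lag / sample_rate


def bearing_from_delay(delay_s, mic_spacing_m):
    """Far-field bearing in degrees from broadside: sin(theta) = c * tau / d."""
    s = np.clip(SPEED_OF_SOUND * delay_s / mic_spacing_m, -1.0, 1.0)
    return float(np.degrees(np.arcsin(s)))
```

A squawk that reaches one microphone slightly before the other yields a nonzero delay, and the resulting bearing could then be used to point a camera toward the sound.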

We’ve also been experimenting with visual servoing using a pan-tilt-zoom camera for tracking.

Perhaps we can use sound direction detection to focus our PTZ camera or make use of the Skagen & Klim datasets for more powerful parrot detection. Stay tuned for updates!