Sometimes we can successfully apply transfer learning with relatively few labeled samples to develop custom models. However, there are times when the cost of acquiring data is so great that even gathering a few examples to learn from is difficult.

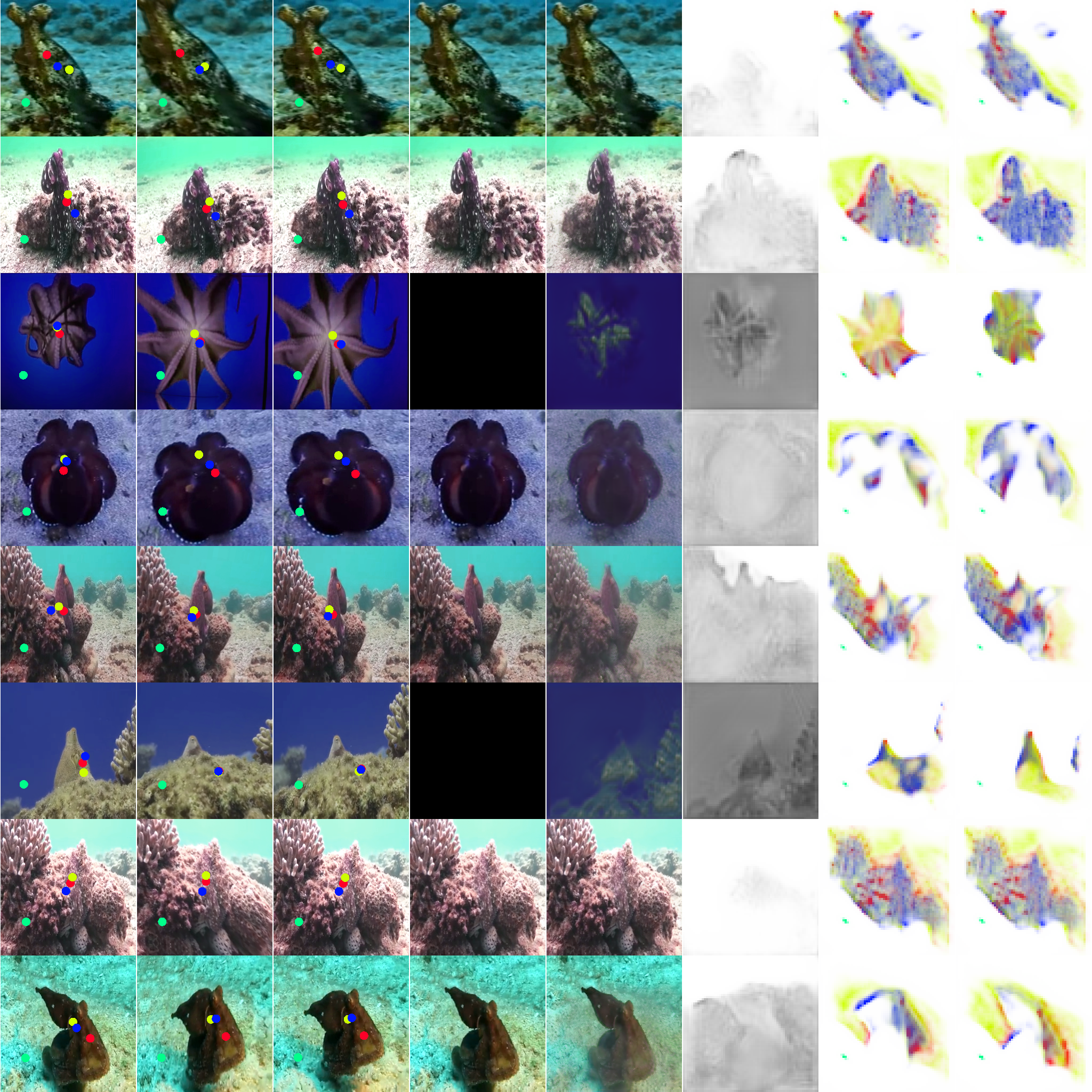

Scientists curate databases like FathomNet to share expertise about the ocean’s wildlife. In practice, applying machine learning to classify marine species is quite challenging, due in part to the rarity of encounters and to difficult photographic environments.

My Octopus Tracker

The octopus is one of the most difficult animals to film because of its intelligence and sophisticated camouflage. These animals have an amazing capacity to quickly change shape, color, and texture.

Despite this challenge, films like “My Octopus Teacher” document complex behaviors learned over the course of daily interactions. But even given the narrator’s skill and commitment, it was difficult to maintain consistent and sustained interactions to learn about the animal.

Typically, biologists set up “camera traps” in the territory of a target animal to record any activity within the cameras’ field of view. This method allows scientists to observe with limited influence on the animal’s behavior.

We are interested in applications like these, where perception systems can aid scientific investigations into complex animal behaviors.

Rendering Synthetic Samples

Faced with limited training samples, researchers have turned to simulation to develop powerful recognition systems to support their studies. In some cases, models trained on synthetic data can even outperform models trained on real data.

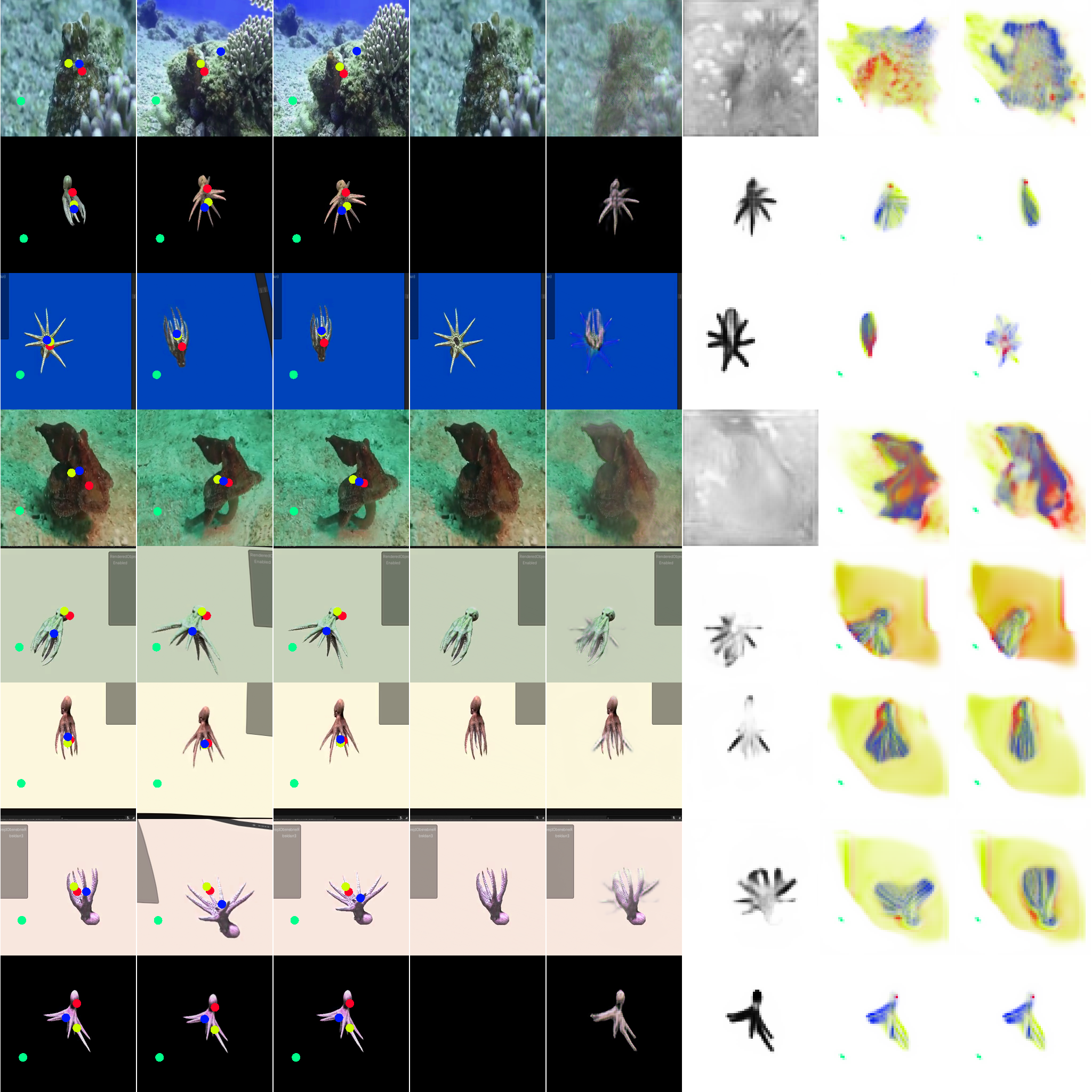

Generating synthetic samples can be especially helpful in gathering ground-truth labels for tasks like semantic segmentation or keypoint estimation, where the cost of annotation is considerable. Likewise, we can cheaply render video from many perspectives using tools like Unity.
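To make the "free labels" point concrete, here is a minimal sketch of how keypoint annotations fall out of a rendered scene: since we already know where each joint of the 3D asset sits relative to the camera, a pinhole projection gives its pixel location. This is not the Unity API. The `PinholeCamera` class, the joint names, and the camera parameters are all hypothetical, and the sketch ignores occlusion.

```python
from dataclasses import dataclass


@dataclass
class PinholeCamera:
    """Idealized camera (assumption: square pixels, principal point at image center)."""
    focal_px: float  # focal length expressed in pixels
    width: int       # image width in pixels
    height: int      # image height in pixels

    def project(self, point_cam):
        """Project a 3D point in camera coordinates (x right, y down, z forward) to pixels."""
        x, y, z = point_cam
        if z <= 0:
            return None  # point is behind the camera, so there is no valid label
        u = self.focal_px * x / z + self.width / 2
        v = self.focal_px * y / z + self.height / 2
        return (u, v)


def keypoint_labels(camera, joints_cam):
    """Turn known 3D joint positions into 2D keypoint labels for one rendered frame."""
    labels = {}
    for name, point in joints_cam.items():
        uv = camera.project(point)
        # "in_frame" only checks image bounds; it does not test for occlusion.
        in_frame = uv is not None and 0 <= uv[0] < camera.width and 0 <= uv[1] < camera.height
        labels[name] = {"uv": uv, "in_frame": in_frame}
    return labels


if __name__ == "__main__":
    camera = PinholeCamera(focal_px=500.0, width=640, height=480)
    joints = {"mantle": (0.0, 0.0, 2.0), "arm_tip": (0.4, -0.2, 2.0)}  # hypothetical joints
    for name, label in keypoint_labels(camera, joints).items():
        print(name, label)
```

A real renderer would export these labels alongside each frame, which is what makes annotation-heavy tasks so much cheaper with synthetic data.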

For the purposes of applying synthetic data to develop our perception algorithms, it’s easiest to buy an existing 3D model of our target class. Some 3D assets also include kinematic models and realistic animations.

We found this survey on synthetic data helpful in considering strategies to manage the gap between real and synthetic data.

From here, Unity plugins make it easy to import your new 3D asset, and the Perception package tutorials make a great entry point for learning about synthetic data.

Combining techniques learned from the above tutorial with those from another, we can apply randomizations to the lighting and orientation of our asset and render animations to learn from.
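As a rough illustration of what these randomizations amount to, the sketch below samples a lighting and orientation configuration for each rendered clip. It is plain Python rather than Unity code, and the `SceneParams` fields and every numeric range are assumptions chosen for illustration, not values from our project. Note that sampling Euler angles uniformly does not give uniformly distributed rotations, which is acceptable for a sketch.

```python
import random
from dataclasses import dataclass


@dataclass
class SceneParams:
    """Hypothetical per-clip render settings."""
    light_intensity: float  # relative brightness of the main light
    light_yaw_deg: float    # direction the light comes from
    light_pitch_deg: float
    asset_yaw_deg: float    # orientation of the octopus asset
    asset_pitch_deg: float
    asset_roll_deg: float


def sample_scene(rng):
    """Draw one randomized configuration (all ranges are illustrative assumptions)."""
    return SceneParams(
        light_intensity=rng.uniform(0.2, 2.0),
        light_yaw_deg=rng.uniform(0.0, 360.0),
        light_pitch_deg=rng.uniform(10.0, 90.0),
        asset_yaw_deg=rng.uniform(0.0, 360.0),
        asset_pitch_deg=rng.uniform(-30.0, 30.0),
        asset_roll_deg=rng.uniform(-30.0, 30.0),
    )


def sample_scenes(n, seed=0):
    """Sample n configurations from a seeded generator so a dataset can be regenerated."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for params in sample_scenes(3, seed=42):
        print(params)
```

Seeding the generator is a small design choice that pays off later: if a rendered clip turns out to be a useful hard case, the same configuration can be reproduced exactly.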

Learning Keypoints from Motion

With many more samples to learn from, we apply articulated animation to the task of learning motion representations for our octopus target.

Training with synthetic data gives the practitioner more control over the data distribution, which can be especially helpful for improving performance on hard cases. We first train on the dataset augmented with synthetic samples, then remove the synthetic samples and fine-tune on our limited collection of real videos.
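The two-stage schedule described above can be sketched as a simple batch generator: a first stage that draws from real and synthetic samples together, followed by a second stage restricted to real samples. This is a framework-agnostic sketch, not our training code. The function name, epoch counts, and batch size are assumptions, and the samples are stand-in tuples rather than video clips.

```python
import random


def two_stage_batches(real, synthetic, pretrain_epochs, finetune_epochs, batch_size, seed=0):
    """Yield (stage, batch) pairs: mixed real + synthetic first, then real only."""
    rng = random.Random(seed)
    stages = [
        ("pretrain", list(real) + list(synthetic), pretrain_epochs),
        ("finetune", list(real), finetune_epochs),
    ]
    for stage, pool, epochs in stages:
        for _ in range(epochs):
            order = pool[:]       # copy so the source pool is never reordered
            rng.shuffle(order)    # new sample order every epoch
            for start in range(0, len(order), batch_size):
                yield stage, order[start:start + batch_size]


if __name__ == "__main__":
    real = [("real", i) for i in range(4)]            # stand-ins for real clips
    synthetic = [("synthetic", i) for i in range(12)]  # stand-ins for rendered clips
    for stage, batch in two_stage_batches(real, synthetic, 1, 1, batch_size=4):
        print(stage, batch)
```

In a real pipeline each batch would feed a training step, and the fine-tuning stage would typically use a lower learning rate so the small real dataset adjusts the model without erasing what it learned from the larger mixed set.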

Next Steps

We find rendering synthetic samples appealing for low-shot learning and improving model robustness around difficult edge cases for which we have limited access to training samples.

We’ve experimented with randomized orientation, lighting, animation speeds, and background colors, and we plan to explore randomized textures and even motions.

For a simple demonstration, we render variations on our original texture using an online style transfer app.

The Barracuda project makes it possible to apply neural rendering, and techniques like 3D StyleNet look like exciting directions in this line of work.

Synthetic data and simulated environments can be especially powerful in developing applications for which the cost of failure during learning is too great.

That’s why we’ve begun exploring Unity for the development of RL agents. Unity offers a great introductory course on ML-Agents.

This example uses Unity’s warehouse generator and ROS-TCP Connector to explore robotics applications like SLAM-based navigation.