Make sure to check out the databricks notebook which complements this post!

Modern internet companies maintain many image/video assets rendered at various resolutions to optimize content delivery. This demand gives rise to very interesting optimization problems.

Groups like Netflix have even taken steps to personalize the images presented to each user, but as they describe, this involves subproblems in organizing the collection of images.

In particular, researchers described extracting image metadata to help cluster near duplicate images so they could more efficiently apply techniques like contextual bandits for image personalization.

Computer vision techniques show promise in content-based recommendation strategies, but extracting structured information from unstructured data presents additional challenges at large scale.

Measurements using SSIM can help in deduplicating an image corpus. SSIM is a full-reference metric designed to assess image quality after compression, also see VMAF.

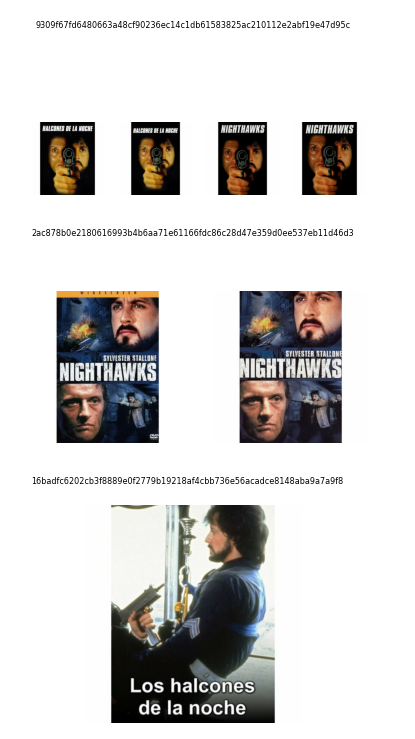

We recently acquired a large corpus of theatrical posters, many of which were near-duplicates. In this post, we show a simple deduplication method using hierarchical clustering based on SSIM.

Here, we create a custom udf to cluster images used in databricks notebook.

@udf(returnType=T.ArrayType(T.IntegerType()))

def image_cluster(image_list, threshold=0.5, img_shape=(300, 300)):

"""Applies AgglomerativeClustering to images after computing

similarity using SSIM

Parameters

----------

img_lst : Array of images

A group of similar images as bytestrings to deduplicate.

threshold: float

The `distance_threshold` in AgglomerativeClustering.

img_shape: tuple of ints

Image shape to compare reference and test images using SSIM.

Return

------

List

Return an List of Integers indexing per group cluster id.

"""

if len(image_list) == 1:

return [0]

clustering_model = AgglomerativeClustering(

n_clusters=None, distance_threshold=0.5, linkage="ward"

)

frames = [url_to_numpy(img, img_shape) for img in image_list]

clustering_model.fit(

np.array([[ssim(f, ff) for ff in frames] for f in frames])

)

return clustering_model.labels_.tolist()

We also use metadata to apply this udf to small groups of images related by common IMDb id. After merging near-duplicates, we can choose exemplar images for downstream analysis.

Check out our databricks notebook to dedupe our Spark dataframe of images.