Repo for this project here!

A seasoned gardener can diagnose plant stress by visual inspection.

For our entry to the TensorFlow Microcontroller Challenge, we chose to highlight the issue of water conservation while pushing the limits of computer vision applications. Our submission, dubbed “Droop, There It Is,” builds on previous work to identify droopy, wilted plants.

Drought stress in plants typically manifests as visually discernible drooping and wilting, which indicates low turgidity, or water pressure, and is associated with plasmolysis at the cellular level. Low water pressure in a plant can result from rapid transpiration, and it affects nutrient transport.

Schedule-based irrigation is simple but cannot adapt to the visual context of plant stress. The burden remains on the gardener to adjust for changing demands to limit waste and damage due to suboptimal watering.

Plant monitors are popular as hardware projects and typically introduce additional context for smart irrigation using a soil moisture sensor such as the YL-69. Here instead, we use on-device computer vision models run on images sampled from a camera feed.

The visual approach is less invasive and can be deployed on minimal hardware with greater mechanical simplicity. Though computer vision remains largely task-specific, high-performance image classifiers are attainable by training neural networks with transfer learning.

In this update, we apply techniques like knowledge distillation (KD) to reduce the footprint of our model. While the original POC ran on the 3.3V Pi Zero, this update shrinks the model enough to fit on the battery-powered Arduino Nano 33 BLE Sense!

A Bit About the Board

We consider the Arduino Nano 33 BLE Sense a fantastic platform for prototyping edge AI projects.

A powerful processor along with all the popular interfaces helped us to demo the MuttMentor, which combined keyword spotting and action recognition to demonstrate a “smart” dog clicker. We’ve even attached a camera to perform person detection with TensorFlow Lite image classifiers!

Like the latter demo, this demo uses the ArduCam to perform image classification. However, here we train a custom classifier using transfer learning and model distillation in Keras instead of tf-slim.

Training Droop, There It Is

Training an image classifier tiny enough to fit on the Arduino but large enough to maintain sufficient accuracy is a constrained optimization challenge. Fortunately, knowledge distillation offers a principled approach to training tiny models.

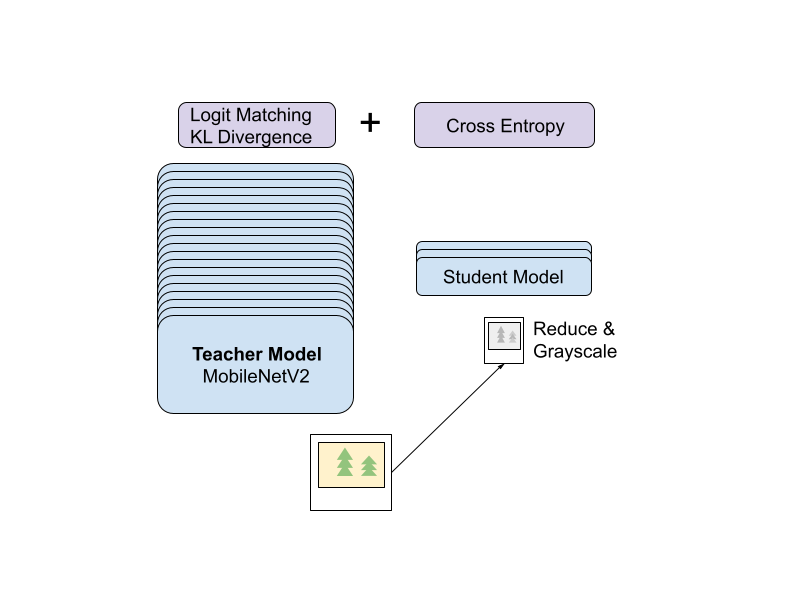

In its simplest form, KD trains a student model so that its outputs match those of a more powerful teacher model. This is accomplished by augmenting the standard categorical cross-entropy loss with an additional term: the KL divergence between the teacher's and student's predicted distributions, each computed from the model's logits.

In practice, a temperature parameter is incorporated to soften these distributions, helping correct for over-confident teacher predictions. The survey linked above cites Yuan et al. in interpreting KD as an adaptive generalization of label smoothing.
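The loss described above can be sketched in plain Python for a single example. This is a minimal illustration, not the project's training code: the function names are hypothetical, the weighting `alpha * hard + (1 - alpha) * soft` is an assumed convention, and scaling the soft term by `temperature ** 2` is a common choice rather than something the post confirms.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(label, probs):
    """Categorical cross entropy against a single integer class label."""
    return -math.log(probs[label])


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(student_logits, teacher_logits, label,
                      alpha=0.1, temperature=1.0):
    """Weighted sum of the hard-label loss and the teacher-matching term.

    Assumption: alpha weights the hard-label term and (1 - alpha) weights
    the KL term, which is multiplied by temperature ** 2.
    """
    hard = cross_entropy(label, softmax(student_logits))
    soft = kl_divergence(
        softmax(teacher_logits, temperature),
        softmax(student_logits, temperature),
    ) * temperature ** 2
    return alpha * hard + (1 - alpha) * soft
```

When the student already reproduces the teacher's logits, the KL term vanishes and only the weighted hard-label loss remains, which makes for a quick sanity check of any implementation.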

Logit matching may also offer a mechanism to inject prior information, which can benefit training small models. But importantly, less accurate but well-calibrated models tend to make better teachers than over-confident, high-accuracy ones.

Furthermore, teacher model confidence and calibration offer instance-level influence on gradient updates while training the final model.

Considering these findings, we chose a MobileNetV2 base model pre-trained on ImageNet as the starting point for fine-tuning a teacher model on our roughly balanced collection of 6K images sourced from search and a short crawl.

To make the most of our image collection, we employed standard image augmentation methods. In summary, we applied simple photometric and geometric distortions (hue, rotation, horizontal flip) at random after triplicating the training data.
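As a rough illustration of this recipe, the sketch below triplicates a dataset and applies a random flip, rotation, and hue shift to each copy using only the standard library. Everything here is an assumption made for illustration: images are nested lists of RGB tuples with floats in [0, 1], rotation is simplified to a 90-degree turn, and the probabilities and hue range are placeholders rather than the values we used.

```python
import colorsys
import random


def shift_hue(image, delta):
    """Shift the hue of every RGB pixel by delta, wrapping around the color wheel."""
    shifted = []
    for row in image:
        new_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r, g, b)
            new_row.append(colorsys.hsv_to_rgb((h + delta) % 1.0, s, v))
        shifted.append(new_row)
    return shifted


def flip_horizontal(image):
    """Mirror each row left to right."""
    return [list(reversed(row)) for row in image]


def rotate_90(image):
    """Rotate the image clockwise by 90 degrees (a simplification of arbitrary rotation)."""
    return [list(column) for column in zip(*image[::-1])]


def augment(image, rng):
    """Apply each distortion at random; probabilities and ranges are placeholders."""
    if rng.random() < 0.5:
        image = flip_horizontal(image)
    if rng.random() < 0.5:
        image = rotate_90(image)
    return shift_hue(image, rng.uniform(-0.05, 0.05))


def build_training_set(examples, copies=3, seed=0):
    """Repeat every (image, label) pair `copies` times, augmenting each copy."""
    rng = random.Random(seed)
    return [(augment(image, rng), label)
            for image, label in examples
            for _ in range(copies)]
```

In a real pipeline these steps would normally be handled by the training framework's image preprocessing utilities; the point here is only the order of operations: replicate first, then distort each copy independently.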

Adding a small dense layer, we kept the count of trainable parameters under 200K to fine-tune our teacher model for up to 20 epochs with early stopping (patience=3).
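For readers unfamiliar with early stopping, here is a minimal sketch of the stopping rule in plain Python. It assumes the monitored quantity is a per-epoch validation loss, which the post does not specify, and the function name is hypothetical.

```python
def run_with_early_stopping(val_losses, max_epochs=20, patience=3):
    """Simulate early stopping over a sequence of per-epoch validation losses.

    Training halts once `patience` consecutive epochs pass without a new
    best loss, or when `max_epochs` is reached.
    Returns (epochs_run, best_epoch), both 1-indexed.
    """
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0
    epochs_run = 0
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        epochs_run = epoch
        if loss < best_loss:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break
    return epochs_run, best_epoch
```

For example, with losses `[0.9, 0.7, 0.6, 0.61, 0.62, 0.63, 0.5]` the run stops after epoch 6, never reaching the lower loss at epoch 7. That is the trade-off a small patience value makes in exchange for shorter training.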

Next, we adapted a nice Keras KD example by probing temperature and alpha parameter combinations, aiming to keep the two summands in the loss on a comparable scale. Ultimately, we found alpha=0.1 and temperature=1 to work well.

Our student model used a very simple CNN architecture operating on inputs converted to 32x32 grayscale images, giving a model with fewer than 7K parameters! Ultimately, we achieved a nearly 25X reduction in trainable parameter count at a cost of merely 5% reduced accuracy before quantization! Of course, this undercounts the millions of frozen parameters in the teacher’s MobileNetV2 base model, which can’t fit on the device!
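To give a feel for how small such a network is, the sketch below counts the parameters of a hypothetical student. The layer sizes are illustrative assumptions, not our actual architecture: three 3x3 convolutions with 8, 16, and 32 filters on a single grayscale channel, global average pooling, and a 2-class dense output.

```python
def conv2d_params(kernel_size, in_channels, filters):
    """Weights plus one bias per filter for a square 2D convolution."""
    return (kernel_size * kernel_size * in_channels + 1) * filters


def dense_params(inputs, units):
    """Weights plus one bias per unit for a fully connected layer."""
    return (inputs + 1) * units


def student_param_count():
    """Total trainable parameters for the hypothetical student described above."""
    layers = [
        conv2d_params(3, 1, 8),    # grayscale input has a single channel
        conv2d_params(3, 8, 16),
        conv2d_params(3, 16, 32),
        dense_params(32, 2),       # after global average pooling: 32 features
    ]
    return sum(layers)


if __name__ == "__main__":
    print(student_param_count())
```

Note that with global average pooling the count does not depend on the 32x32 input resolution; resolution instead drives activation memory and inference time, which matter just as much on a microcontroller.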

While this classifier is far from displacing a gardener’s reasoning, well-understood optimizations around data curation and model improvements may lead to a robust, context-aware irrigation switch.

Droop, There It Is Demo Dumps the Pumps

The original droop demo controlled peristaltic pumps, informed by computer vision inferences to optimize for water conservation. The Arduino Nano 33 BLE Sense runs on a tiny battery and is meant for low power consumption, so we eliminate the pumps.

With this new hardware configuration, we instead use the Arduino to signal the need for irrigation through BLE, essentially voicing the plant’s need for water and triggering an irrigation event.

Conclusion

Smart water conservation is a fundamental concern for a growing population. As the economics of water usage and compute resources continue to shift, we anticipate a convergence in agtech innovations around optimizing water consumption.

Perhaps there is a place for highly specialized sensors capable of introducing plant stress signals to optimize water and nutrient delivery. We hope this project gives you food for thought around innovations in water conservation, agtech or otherwise.