In recent experiments, we’ve generated high-quality reconstructions of our apartment from video.

As you learn the failure modes of these methods, you start to move the camera smoothly, avoid bright lights, and focus on textured regions of the field of view (FOV).

If it all works out, you might spend more time recording video than processing it! Automating the data collection can really reduce the cost of mapping and reconstruction.

Compared to recording from a phone/tablet, drones move more smoothly and swiftly. At the same time, drones make it easier to set and vary the camera perspective.

The main limitation for drones might be battery life. To make the best use of limited power, it is important to keep the drone moving while it collects data. This raises another important challenge: obstacle avoidance and control.

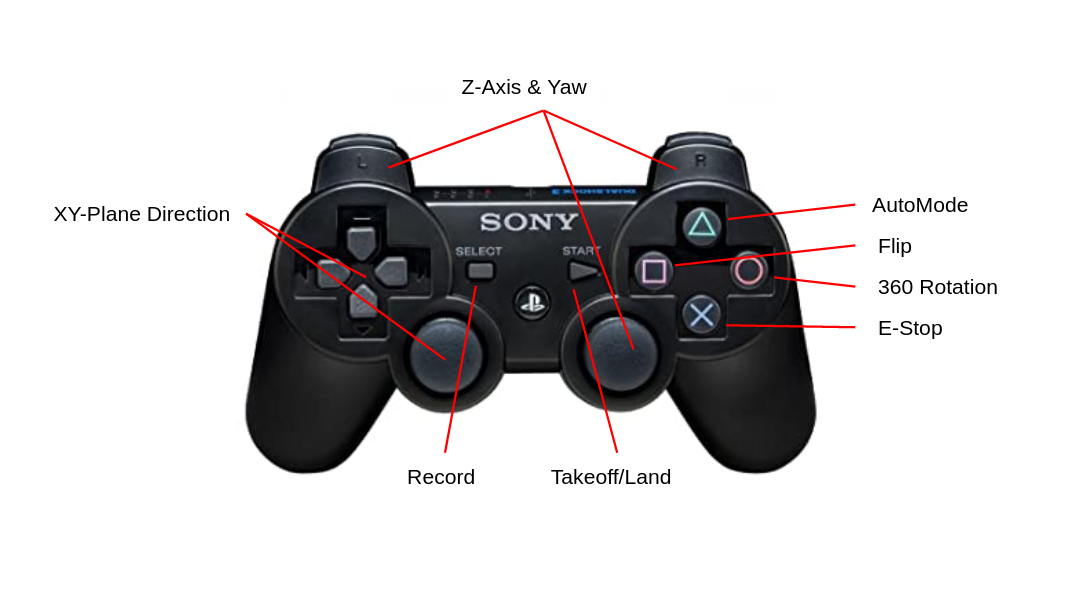

We recently purchased the DJI Tello EDU Edition. Using the SDK, you can control the drone from the keyboard with pygame. Since pygame also reads joystick input, we can fly with our PS3 controller.
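As a rough illustration of the controller mapping, here is a minimal sketch that turns four joystick axis readings into the Tello SDK's `rc a b c d` text command (each value in the range -100 to 100). The function names, the deadzone value, and the axis ordering are our own assumptions for illustration, not part of the SDK.

```python
def axis_to_rc(value: float, deadzone: float = 0.1, max_speed: int = 100) -> int:
    """Map a joystick axis reading in [-1, 1] to an rc value in [-max_speed, max_speed].

    The deadzone (an assumed value) ignores small stick drift around center.
    """
    value = max(-1.0, min(1.0, value))  # clamp out-of-range readings
    if abs(value) < deadzone:
        return 0
    return int(round(value * max_speed))


def rc_command(left_right: float, forward_back: float, up_down: float, yaw: float) -> str:
    """Build the SDK text command 'rc a b c d' from four axis readings."""
    a, b, c, d = (axis_to_rc(v) for v in (left_right, forward_back, up_down, yaw))
    return f"rc {a} {b} {c} {d}"


# Slight drift on the first axis is suppressed; full forward, half speed down.
command = rc_command(0.05, 1.0, -0.5, 0.0)
```

With pygame, the axis readings would likely come from `Joystick.get_axis(i)`, and the resulting string would be sent to the drone over UDP. Many controllers report "stick up" as a negative value, so some axes may need to be negated before calling `rc_command`.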

We find that preconfigured maneuvers like flips and rotations block the camera stream. In addition to images, we can stream IMU data and altitude to help estimate distance and scale.
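The Tello reports its state as a semicolon-separated string of `key:value` pairs. A minimal parser might look like the sketch below. The sample packet is made up for illustration, and the exact set of fields depends on the SDK version, so treat the field names here as assumptions to check against your own drone's output.

```python
def parse_state(packet: str) -> dict:
    """Parse 'key:value;key:value;...' into a dict, converting numbers where possible."""
    state = {}
    for field in packet.strip().split(";"):
        if ":" not in field:
            continue  # skip the empty piece after the trailing ';'
        key, raw = field.split(":", 1)
        try:
            state[key] = float(raw) if "." in raw else int(raw)
        except ValueError:
            state[key] = raw  # keep non-numeric values (e.g. comma-separated triples) as text
    return state


# Illustrative packet, not a recording from our drone.
sample = "pitch:0;roll:-1;yaw:12;tof:85;h:70;bat:64;baro:152.31;agx:-4.00;agy:2.00;agz:-998.00;\r\n"
state = parse_state(sample)
```

From here, fields like `h`, `tof`, and the `ag*` accelerations can be logged alongside each frame to help with scale estimation.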

Now that our camera is flying, we want to avoid collisions, so we incorporated depth estimation using MiDaS, accelerated by a Coral USB Accelerator.

We also added a detector to help select targets to track or follow, like we did with our TurtleBot.

MiDaS, which estimates relative depth, tends to oversmooth at discontinuities and underestimate distance near the edges of the frame. There is impressive work on improving monocular depth estimation using boosting, but this technique is not fast enough for our application.

Our drone’s perception could benefit from additional semantic and geometric constraints.

Apartments typically feature open spaces with planar surfaces, so we experimented with the sparsePlanes demo to get a sense of how plane fitting might help.

With some reductions and hardware acceleration, it may be possible to run this model in real time. Though both plane fitting and monocular depth estimation have shortcomings, they seem reliable at finding the deepest region of the image plane.

This inspires a navigation strategy: orient the camera toward the deepest part of the FOV. Assuming well-paced forward motion, this simple behavior can be effective in obstacle avoidance while fathoming an unmapped space.
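One way to pick the "deepest part of the FOV" is sketched below. It assumes the depth model outputs relative inverse depth (larger values are closer), so the deepest region is the one with the smallest values. The column-averaging approach, the function name, and the window size are our own illustrative choices. It is written with plain lists to stay self-contained; in practice a NumPy array would be the natural input.

```python
def deepest_column_offset(inv_depth, window=3):
    """Return the horizontal offset, in [-1, 1], of the deepest vertical band.

    inv_depth: 2D list (rows x cols) of relative inverse depth, larger = closer.
    -1 is the left edge of the image, 0 the center, +1 the right edge.
    """
    rows, cols = len(inv_depth), len(inv_depth[0])
    # Average each column so one noisy pixel cannot dominate.
    col_means = [sum(row[c] for row in inv_depth) / rows for c in range(cols)]
    half = window // 2
    best_c, best_score = 0, float("inf")
    for c in range(cols):
        # Smooth over neighboring columns; the window is truncated at the borders.
        lo, hi = max(0, c - half), min(cols, c + half + 1)
        score = sum(col_means[lo:hi]) / (hi - lo)
        if score < best_score:  # smallest inverse depth = farthest away
            best_c, best_score = c, score
    center = (cols - 1) / 2
    return (best_c - center) / center if center else 0.0


# Toy 2x7 map: the far region sits one column right of center.
toy = [[9, 9, 8, 2, 1, 2, 8],
       [9, 9, 8, 2, 1, 2, 8]]
offset = deepest_column_offset(toy)
```

Because the smoothing window is truncated at the image borders, this sketch can favor edge columns, so it may be worth cropping the borders first.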

Putting our drone’s yaw under PID control, we orient the drone toward the deepest part of the image plane. In this way, we can use the smoothness bias of MiDaS’s depth estimates to guide us toward safety with depth-first exploration.
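A minimal PID sketch for the yaw loop is shown below. It assumes the error signal is the horizontal offset of the deepest region from the image center, normalized to [-1, 1], and that the output is a yaw rate clamped to the -100 to 100 range of the `rc` command. The gains are placeholders, not our tuned values, and there is no anti-windup logic.

```python
class PID:
    """Textbook PID controller with output clamping."""

    def __init__(self, kp: float, ki: float, kd: float, out_limit: float = 100.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_limit = out_limit
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the first update

    def update(self, error: float, dt: float) -> float:
        """Return the control output for the current error after dt seconds."""
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        out = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(-self.out_limit, min(self.out_limit, out))


# Placeholder gains: the deepest region is halfway to the right edge of the frame.
yaw_pid = PID(kp=60.0, ki=0.0, kd=0.0)
yaw_rate = yaw_pid.update(error=0.5, dt=0.1)
```

A positive output would be sent as a clockwise yaw command, turning the camera toward the deeper region until the error approaches zero.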

Since we want our drone to be capable of navigating unseen spaces, the ability to generate a map in real time is important, so we experimented with DROID-SLAM.

We also evaluated an optimization-based monocular VSLAM method: PL-VINS. This lightweight method uses both point and line features with an implementation of line segment detection streamlined for the pose estimation use case.

With some of these fundamentals in place, we can help our drone quickly scan a space before the battery dies. We can monitor the battery level and develop an emergency landing behavior that triggers before the charge reaches 9%.
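The battery check could be as simple as the sketch below, which maps a reported battery percentage to an action. The threshold values and action names are hypothetical; the only constraint taken from above is that the landing threshold sits above 9%.

```python
def battery_action(bat_percent: int, land_at: int = 12, warn_at: int = 25) -> str:
    """Decide what to do given the reported battery percentage.

    land_at and warn_at are assumed thresholds; land_at is kept above 9%
    to leave some margin for the descent.
    """
    if bat_percent <= land_at:
        return "land"      # stop scanning and land immediately
    if bat_percent <= warn_at:
        return "wrap_up"   # finish the current sweep, start no new ones
    return "continue"


actions = [battery_action(b) for b in (80, 25, 12, 9)]
```

In a control loop, a `"land"` result would stop the yaw controller and send the SDK's `land` command.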

Using this platform, we can scale up to scanning larger spaces with a swarm!

With a low barrier to entry, the Tello drone made it easier to consider in-flight video capture for reconstruction. Automating the data collection process can both reduce the need for humans to carefully capture a space and increase the overall quality of the resulting reconstruction.