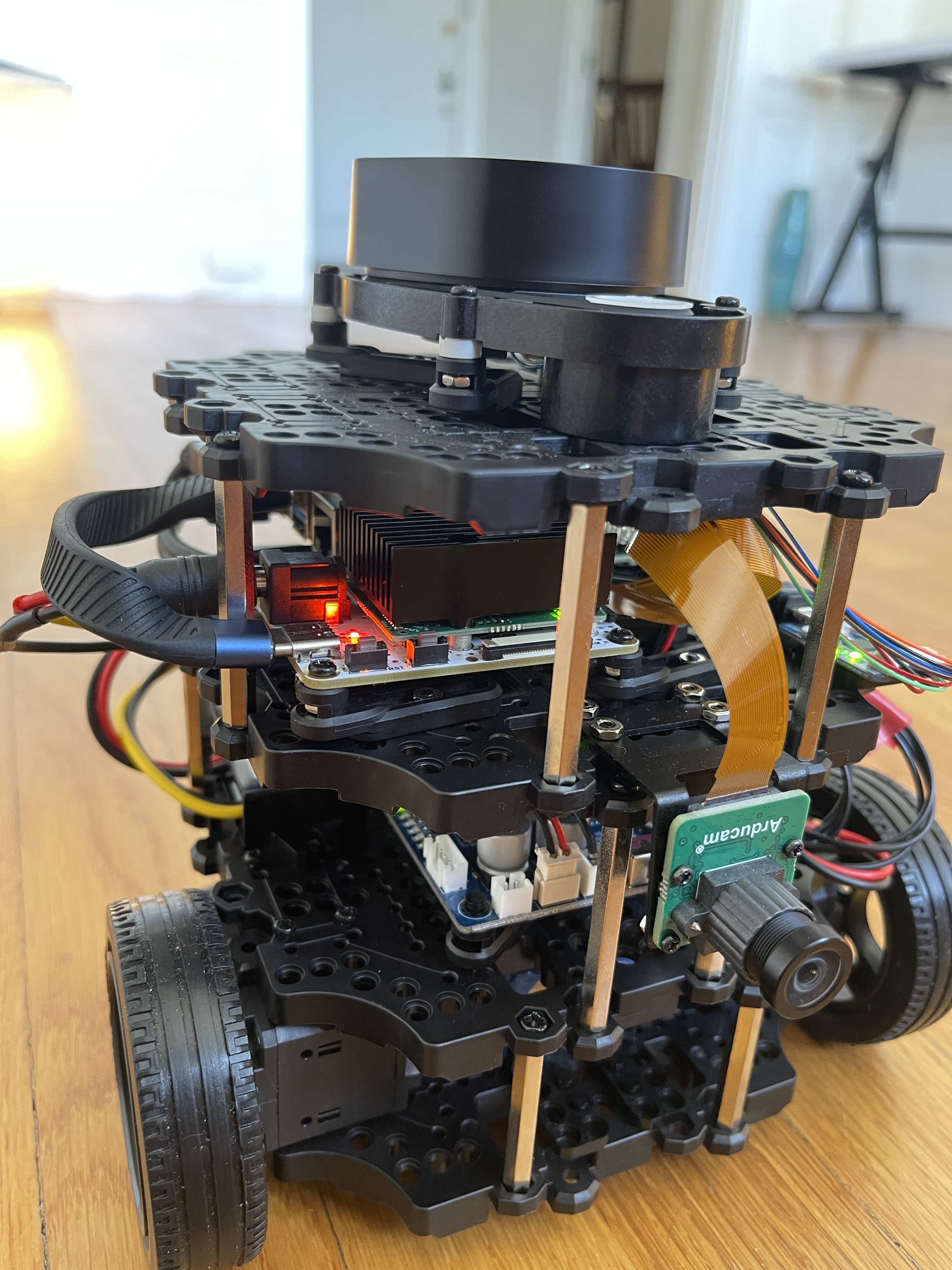

TurtleBot has open-sourced designs for many low-cost personal robot kits, and the Burger is a great platform for learning ROS and mobile robotics.

The documentation progresses from setup to more advanced robot behavior including SLAM, navigation, autonomous driving and more!

Our Build

Since the TurtleBot3 Burger kit doesn’t include a camera, we added the OAK-FFC-3P camera made by Luxonis, paired with a 100-degree Arducam, to our build. This device includes deep learning accelerators on the camera, so we can reduce the compute load on the Raspberry Pi host. Check out the DepthAI ROS examples.

While you can power the OAK camera directly from the USB3 connection to your laptop, we found it best to step down the voltage from the OpenCR board and supplement the camera’s power from the battery.

We also found the kit’s controller easier to use than keyboard navigation. It communicates directly with the OpenCR module, which spares the Pi the additional compute.

In Action

We tested teleoperation first by starting the basic bringup routine and connecting the controller via Bluetooth.

The camera feed helps in teleoperating our bot, so we modified the rgb_publisher node from the depthai-ros-examples repo to run additional nodes for object detection & tracking with monocular depth estimation on the OAK device.

Unless we reduced the frame rate, I/O bottlenecks in streaming RGB images caused the pipeline to crash. After visualizing the image stream, we launched the SLAM node to produce a map of our space.
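To illustrate the frame-rate point, here is a minimal sketch of dropping frames before they are published. It is not the code from our node: the `FrameThrottle` class and its `should_publish` method are hypothetical names, and the sketch assumes each frame arrives with a timestamp in seconds.

```python
class FrameThrottle:
    """Lets a frame through only if enough time has passed since the last one.

    Hypothetical helper for illustration; not part of depthai-ros-examples.
    """

    def __init__(self, max_fps):
        if max_fps <= 0:
            raise ValueError("max_fps must be positive")
        self.min_interval = 1.0 / max_fps  # seconds between published frames
        self.last_sent = None              # timestamp of the last published frame

    def should_publish(self, timestamp):
        """Return True if the frame at `timestamp` (seconds) should be published."""
        if self.last_sent is None or timestamp - self.last_sent >= self.min_interval:
            self.last_sent = timestamp
            return True
        return False  # too soon after the previous frame: drop it


if __name__ == "__main__":
    throttle = FrameThrottle(max_fps=10)
    for t in (0.0, 0.05, 0.2, 0.25):
        print(t, throttle.should_publish(t))
```

Dropping frames on the host only relieves the link between the Pi and the desktop. Lowering the frame rate at the camera also reduces the load on the USB connection, so it is usually the better first step.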

The SLAM workload is heavy for a Raspberry Pi, so we run this node on our ROS master desktop.

After successfully mapping our apartment using lidar and odometry data, we tried navigation!

Follow Me

With FastDepth for monocular depth estimation along with detection and tracking, our TurtleBot can make inferences about its spatial relationship to objects in the field of view. This can be applied to enable our robot to follow us by visual servoing.
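As a rough sketch of what "spatial relationship" can mean here, the function below turns a depth map and a tracked bounding box into a distance and a bearing. This is an illustration under stated assumptions, not our node's code: `target_from_detection` is a hypothetical name, the depth map is assumed to be a plain 2D list in whatever units the depth model produces, and the 100-degree value is assumed to be the horizontal field of view of an ideal pinhole camera.

```python
import math
from statistics import median


def target_from_detection(depth_map, bbox, hfov_deg=100.0):
    """Return (distance, bearing_rad) for a tracked bounding box.

    depth_map: 2D list of per-pixel depth estimates (list of rows).
    bbox: (x_min, y_min, x_max, y_max) in pixels, max edges exclusive.
    hfov_deg: assumed horizontal field of view of the lens, in degrees.
    """
    height = len(depth_map)
    width = len(depth_map[0])
    x_min, y_min, x_max, y_max = bbox

    # Clamp the box to the image so a partly visible target still works.
    x_min, x_max = max(0, x_min), min(width, x_max)
    y_min, y_max = max(0, y_min), min(height, y_max)
    if x_min >= x_max or y_min >= y_max:
        raise ValueError("bounding box lies outside the image")

    # The median is less sensitive than the mean to background pixels
    # that fall inside the box.
    values = [depth_map[y][x]
              for y in range(y_min, y_max)
              for x in range(x_min, x_max)]
    distance = median(values)

    # Pinhole model: focal length in pixels from the horizontal FOV.
    focal_px = (width / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)
    box_center_x = (x_min + x_max) / 2.0

    # Positive bearing means the target is to the right of the image center.
    bearing = math.atan2(box_center_x - width / 2.0, focal_px)
    return distance, bearing


if __name__ == "__main__":
    # Toy 4x8 depth map: far background on the left, near target on the right.
    toy_depth = [[5.0] * 4 + [1.5] * 4 for _ in range(4)]
    print(target_from_detection(toy_depth, (4, 0, 8, 4)))
```

A wide-angle lens distorts the image noticeably, so the pinhole bearing is only approximate near the edges of the frame. For a follow behavior that keeps the target near the center, that error matters less.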

In essence, we create a ROS publisher/subscriber node which uses PID control to update messages sent to the /cmd_vel topic based on the estimated distance between the robot and the tracked person.

Since our robot is constrained to move in a two-dimensional plane, we use two controllers, one for angular and one for linear motion. First, we tuned the controller for angular motion before determining coefficients for the linear motion controller.
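The two-controller idea can be sketched in plain Python as follows. This is a minimal illustration, not our tuned node: the `PID` class, the `follow_command` function, the gains, and the 1.0 target distance are all hypothetical, and the sketch assumes distance and bearing estimates for the tracked person are already available from the perception nodes. In a ROS node, the two returned values would fill the `linear.x` and `angular.z` fields of the `geometry_msgs/Twist` message published to /cmd_vel.

```python
class PID:
    """Textbook PID controller with a symmetric output clamp."""

    def __init__(self, kp, ki, kd, limit):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.limit = limit        # output is clamped to [-limit, +limit]
        self.integral = 0.0
        self.prev_error = None    # no derivative term on the first update

    def update(self, error, dt):
        if dt <= 0:
            raise ValueError("dt must be positive")
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        output = (self.kp * error
                  + self.ki * self.integral
                  + self.kd * derivative)
        return max(-self.limit, min(self.limit, output))


def follow_command(linear_pid, angular_pid, distance, bearing, dt,
                   target_distance=1.0):
    """Return (linear_x, angular_z) for a follow-me behavior.

    distance: estimated range to the person (same units as target_distance).
    bearing: angle to the person in radians, positive when they are to the right.
    """
    # Person farther away than desired -> positive error -> drive forward.
    linear_x = linear_pid.update(distance - target_distance, dt)
    # ROS treats positive angular.z as a counterclockwise (left) turn,
    # so a target on the right needs a negative command.
    angular_z = angular_pid.update(-bearing, dt)
    return linear_x, angular_z


if __name__ == "__main__":
    # Placeholder gains; the clamps match the Burger's published
    # velocity limits (0.22 m/s and 2.84 rad/s).
    linear = PID(kp=0.5, ki=0.0, kd=0.0, limit=0.22)
    angular = PID(kp=1.0, ki=0.0, kd=0.0, limit=2.84)
    print(follow_command(linear, angular, distance=2.0, bearing=0.2, dt=0.1))
```

Keeping the controllers separate matches the tuning order described above: the linear gains can be set to zero while the angular gains are adjusted, so the robot only rotates in place to face the person.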

Up Next

Stay tuned to see what we do with our TurtleBot next!