In our last post, we trained StyleGAN2 over a corpus of hundreds of thousands theatrical posters we scraped from sites like IMDb.

Then we explored image retrieval applications of StyleGAN2 after extracting embeddings by projecting our image corpus onto the learned latent factor space.

Image retrieval techniques can form the basis of personalized image recommendations as we use content similarity to generate new recommendations.

Netflix engineers posted about testing the impact on user engagement from artwork produced by their content creation team.

Without a design team, we seek algorithmic methods to generate variants. Many of our variants are merely near-duplicates, differing only by aspect ratio or otherwise minor details.

In this post, we apply generative models to create content for producing localized image variants for recommendations.

Localization

Before considering fully personalized image recommendation, we can explore regional variants through localization.

Here, “localizing” image content means replacing the text-based title overlays of an image with one rendered in the regional lingua franca.

Since we scraped our corpus, we lack the original creatives and must instead apply some image edits.

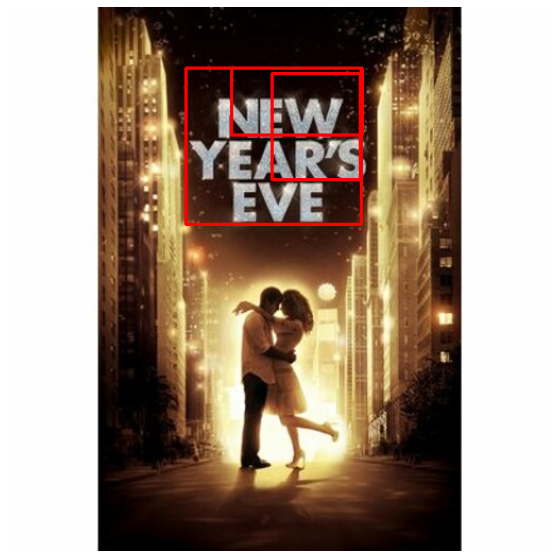

Specifically, we want to mask out the region of the theatrical poster with the title text. Then we want to insert the new localized title variant.

After masking out the title, we must blend backgrounds to maintain similar production quality for our poster.

While simple enough for a few posters, scaling this work for dozens of language variants over a catalogue of thousands or more items with multiple images variants is infeasible.

The prohibitive cost of hiring a design team along with the lack of variants can limit greater image personalization, so we want to automate this as much as possible.

Our ML Application

We can decompose our goal of poster localization into the following steps:

- Identify regions of interest containing title text

- Mask out these regions

- Apply image inpainting

- Add new localized overlay

Text Detection

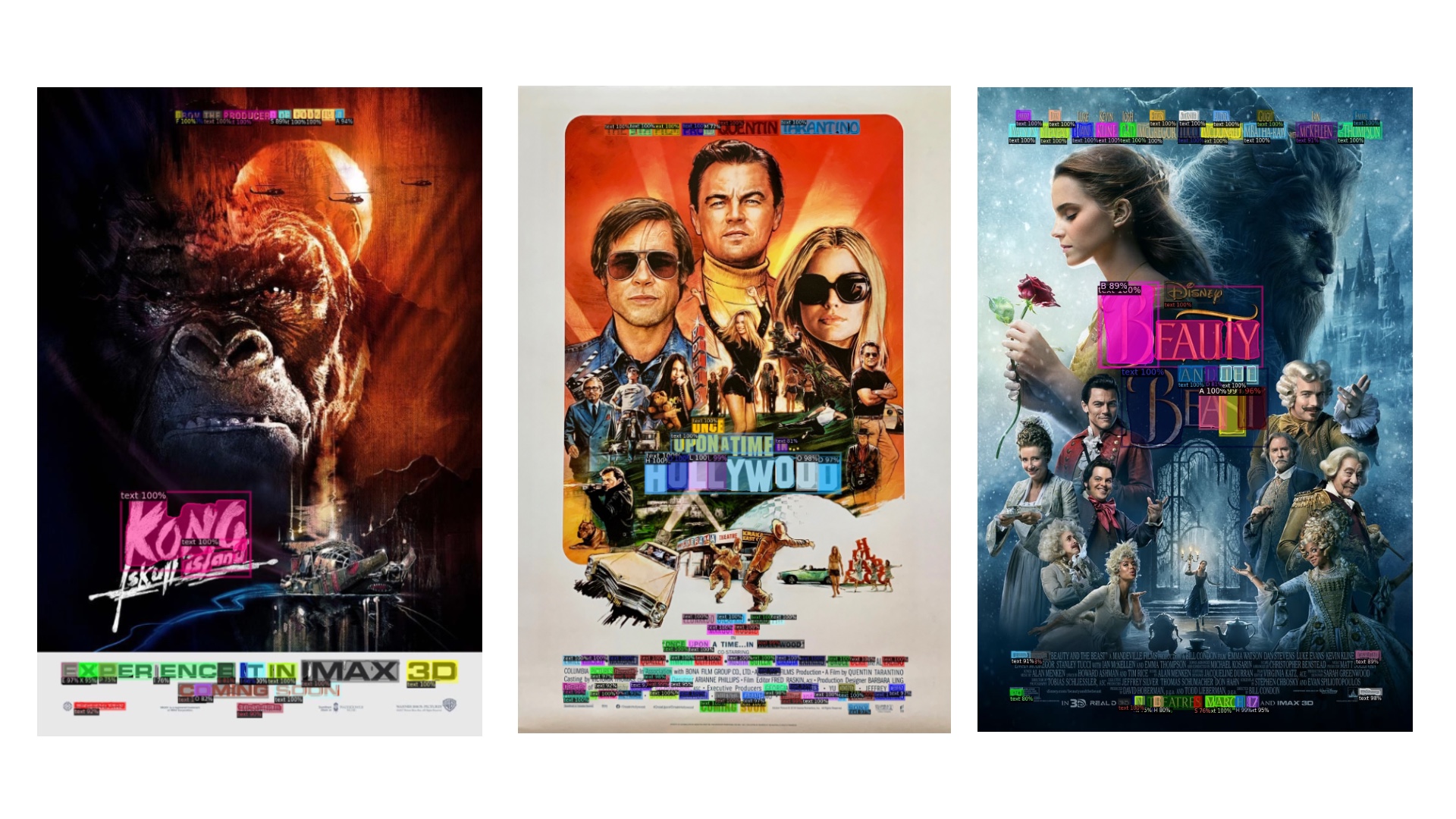

To detect titles, we tested text detection models like EAST, but theatrical posters can be complex compositions so we look for newer, more accurate models.

Surveying scene text detection on paperswithcode, we found TextFuseNet to be a powerful and fast detector for image compositions.

After detection, masking is straightforward and sets us up for the crux of this problem, blending the new overlay into the original image.

Image Inpainting

Upon annihilating the image regions containing text with a mask, we apply image inpainting to approximate the original background image.

Turning again to paperswithcode, we find SOTA references in the domain of image inpainting dominated by GANs.

In particular, we found the recent work Bayesian Image Reconstruction using Generative Models (BRGM).

This model uses the StyleGAN2 latent factors we learned before as priors in a Bayesian model and the results are quite compelling.

Conclusion

After learning StyleGAN2 representations of our poster corpus, we can support image personalization by generating greater variation in our content with automated image editing techniques.

Image editing tools aided with data-driven priors can enable large scalable design workloads by nonexperts.