In applied machine learning, engineers may spend considerable effort optimizing model performance with respect to metrics like accuracy on hold-out data. At times, more nuanced engineering decisions may weigh additional factors, such as latency or algorithmic simplicity.

Across many problem domains, approximate, algorithmic solutions are preferred to more accurate techniques with poor scalability. It’s said that “what’s past is prologue”, an idea which manifests in the most foundational of problem solving methods: use prior information.

At times, we want to discover novelty or anomaly by cross referencing new samples against historical instances. Doing so without paying a cost linear in compute or memory is where things get interesting.

In this post, we consider data sketching, which uses many algorithmic techniques common to ML to support approximate query processing (AQP).

What’s in a Sketch?

Data Sketching is concerned with the efficient analysis of massive and/or streaming datasets. For instance, how would you spot a port scan amidst a large volume of network traffic in real-time?

Due to the scale of the data, efficient often means sublinear complexity. For many modern challenges like streaming data, sketching offers the only known approach, so what is sketching?

To summarize, “data sketching” references a class of stochastic algorithms trading accuracy for speed, endowed with error bounds guaranteed by probablistic estimates. For a motivating example, consider the Bloom filter a basic archetype.

Bloom filters help to efficiently evaluate set membership over large datasets. Using multiple hashing functions, we can encode set membership into a binary array with a single pass over the data.

Later, we fingerprint a query with hash functions and lookup bits indexed in our array, taking the min to determine with certainty if we have never seen the element before. On the other hand, smaller sketches lead to hash collisions and so our parameter choices in this scheme directly influences the false positive rate.

This simple probablistic data structure powers efficient indexing of massive data while suggesting a template to consider similar queries. Simply by replacing bits with integers, we can increment counters to estimate frequency distribution with the CountMin sketch. More generally, sketching entails a dataset compression scheme tailored to answer certain queries.

At a high level, we:

- initialize an approximation

- observe data instances and increment our approximation

- retrieve query results

Concerning our approximation, these techniques start by identifying an (hopefully unbiased) estimator for a quantity of interest. At times, methods use boosting with ensembles to reduce variance. Tail bounds like Markov’s or Chebyshev’s help to ensure control over error.

Another representative scheme, invented at Bell labs in the 70’s by Robert Morris, offers approximate counting. While an exact answer requires $$O(log n)$$ bits, we can reduce complexity by orders of magnitude with approximation.

Important entities in a datasets like popular or trending topics are simply frequent items (over some time range) so we can use sketches designed to identify the “heavy hitters”.

In large systems, performance can be dominated by tail effects prompting groups like Datadog to develop quantile sketches. Alternatively, KLL has better theoretical guarantees with an implemention in an Apache project.

Perhaps you are thinking we should simply sample our dataset. However, it isn’t too hard to find a failure mode for this approach. Consider the challenge of counting distinct elements in a multiset. Here, hyperloglog helps where sampling would fail for many practical instances.

Another industrial application concerns disaggregating ad impression counts by demographic factors. Far from limited to keeping counts, sketching techniques can also be applied to differential privacy!

Johnson-Lindenstrauss lemma helps us to sketch pairwise distance computations in large, high dimensional datasets. Dotting your data with random normal matrices, we can drop dimensions while maintaining an unbiased estimate of squared euclidean distance. Cauchy-Schwarz helps relate L2 norms to inner product so we can extend our sketch to approximate the dot product.

Locality Sensitive Hashing (LSH) is a related idea which helps us to quantize an embedding space, some use this to to scale & distribute distance computations after partitioning by bucket id. Sketching techniques like embedding and sampling can even be applied to compute matrix multiplications or traces.

Implicit trace estimation has applications in efficiently computing matrix norms and spectral densities with randomized numerical linear algebra. Even fundamental techniques like linear regression can be further reduced with subspace embeddings and streaming PCA can be realized as a “frequent directions” problem.

Streaming Eigenfaces

In the deepfake detection challenge, many contestants sampled or skipped frames from video to satisfy the contest’s performance constraint. Aiming to measure temporal inconsistency, we’ve explored different modeling strategies but have been unable to run inference on each frame. However, many faceswaps can appear intermittently as the quality of deepfakes varies.

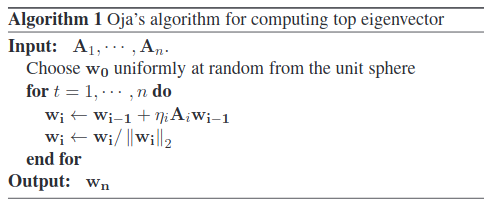

Using Oja’s method, we try applying streaming PCA for eigenfaces on tracked faces.

This model is much faster, parallelizable, and specialized to finding modes in face datasets. Understanding the modes, we look for large deviations betraying the faceswap.

We consider simple ideas to featurize top-K eigenvectors for a classifier or thresholding out-of-band reconstruction error in top-K eigenface projection. In the context of deepfake detection, we expect eigenbasis weights for faceswapped video to feature greater variance over time.

Investigating further, we find similar motivations behind fast motion detection techniques, which also have implementations in skcuda’s randomized linear algebra routines.

As the scale of training datasets increases, we are excited for fast, one-pass algorithms. Many complex video analytics workloads can be made more efficient by first extracting a cheap summary to reduce the effective search space & bandwidth. If these ideas interest you, check out how the research is trending here or here or here.